The Musical Avatar

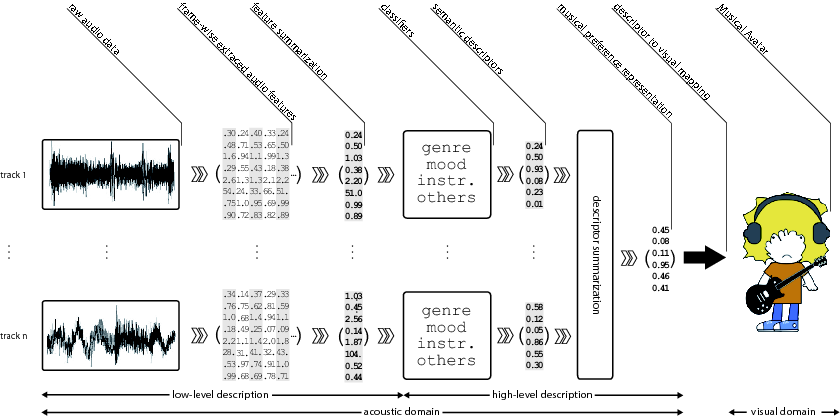

In this project we address the visualization of a listener’s musical preferences by inferring their semantic representation from audio and creating a humanoid, cartoon-like character, the Musical Avatar, based on it. The visualization is generated from a collection of music tracks that a user provides as an example of her/his musical preferences. We automatically compute a range of acoustic features and apply pattern recognition methods to infer a set of semantic descriptors for each track in the collection. Next, we summarize these track-level semantic descriptors to obtain a user profile. Finally, we map this collection-wise description to the visual domain by creating a humanoid cartoon-like character that represents the user’s musical preferences.
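The last two steps (summarizing track-level descriptors into a user profile, then mapping the profile to visual traits) can be illustrated with a minimal Python sketch. This is not the project's implementation: the descriptor names, the use of a plain average as the summary, the 0.5 threshold, and the trait table are all assumptions made for illustration, and the per-track descriptor values are assumed to have been produced already by the audio analysis stage.

```python
from statistics import mean


def summarize_profile(track_descriptors):
    """Average each semantic descriptor over all tracks.

    Averaging is an assumed summarization rule, chosen for simplicity.
    """
    if not track_descriptors:
        raise ValueError("need at least one track")
    names = track_descriptors[0].keys()
    return {name: mean(t[name] for t in track_descriptors) for name in names}


def map_to_avatar(profile, threshold=0.5):
    """Select visual traits for descriptors whose mean exceeds the threshold.

    The descriptor-to-trait table is hypothetical.
    """
    visual_traits = {
        "genre_rock": "electric guitar",
        "mood_happy": "smiling face",
        "mood_relaxed": "headphones",
    }
    return sorted(
        trait
        for name, trait in visual_traits.items()
        if profile.get(name, 0.0) > threshold
    )


# Hypothetical descriptor probabilities for two tracks.
tracks = [
    {"genre_rock": 0.9, "mood_happy": 0.2, "mood_relaxed": 0.4},
    {"genre_rock": 0.7, "mood_happy": 0.6, "mood_relaxed": 0.8},
]
profile = summarize_profile(tracks)
avatar_traits = map_to_avatar(profile)
```

With these example values, `avatar_traits` contains the traits for the rock and relaxed descriptors, since only their averages exceed the threshold.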

These are some examples of Musical Avatars:

![]()